Binary Cross-Entropy Loss

Binary Cross-Entropy (BCE), also known as log loss, is a loss function used to train binary and multi-label classification models.

It's nearly identical to Negative …
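
As a rough illustration of the definition above, the sketch below computes the standard BCE formula, the mean of `-(y*log(p) + (1-y)*log(1-p))`, in plain Python. The function name `binary_cross_entropy` and the clipping constant `eps` are assumptions made for this example, not part of any particular library.

```python
import math

def binary_cross_entropy(labels, probs, eps=1e-12):
    """Mean binary cross-entropy between 0/1 labels and predicted probabilities.

    `eps` is an illustrative clipping constant that keeps log() away from 0.
    """
    total = 0.0
    for y, p in zip(labels, probs):
        # Clip the probability so that log(0) can never occur.
        p = min(max(p, eps), 1.0 - eps)
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(labels)

# A confident, correct prediction gives a small loss; a confident, wrong one a large loss.
good = binary_cross_entropy([1, 0], [0.9, 0.1])
bad = binary_cross_entropy([1, 0], [0.1, 0.9])
```

For multi-label problems, the same per-element loss would typically be averaged over every label of every example.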

Categorical Cross-Entropy Loss Function, also known as Softmax Loss, is a loss function used in multiclass classification model training. It applies the Softmax Activation Function …
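
A minimal sketch of this idea for a single example, assuming the model emits raw scores (logits) and the label is given as a class index. The function name `categorical_cross_entropy` is hypothetical; the loss is computed as `log(sum(exp(logits))) - logits[target]`, which equals the negative log of the softmax probability of the target class.

```python
import math

def categorical_cross_entropy(logits, target_index):
    """Softmax followed by negative log-likelihood for one example."""
    # Subtracting the maximum logit keeps exp() from overflowing.
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
    # -log(softmax(logits)[target_index])
    return log_sum_exp - logits[target_index]

# The loss falls as the target class's logit rises relative to the others.
low = categorical_cross_entropy([5.0, 0.0, 0.0], 0)
high = categorical_cross_entropy([0.0, 5.0, 0.0], 0)
```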

The Softmax Activation Function converts a vector of numbers into a vector of probabilities that sum to 1. It's applied to a model's outputs (or …
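
The conversion described above can be sketched in a few lines of plain Python. The function name `softmax` is chosen for this example, and subtracting the maximum is a common numerical-stability trick rather than part of the definition.

```python
import math

def softmax(logits):
    """Convert a list of numbers into probabilities that sum to 1."""
    # Shifting by the maximum leaves the result unchanged but avoids overflow.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

probs = softmax([2.0, 1.0, 0.1])
```

Larger inputs receive larger probabilities, and the ordering of the inputs is preserved in the output.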

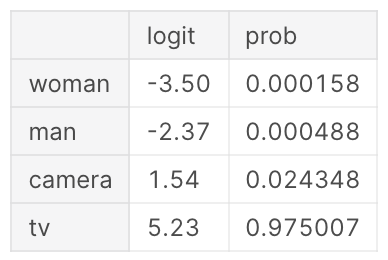

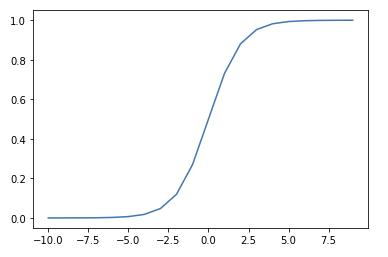

The Sigmoid function, also known as the Logistic Function, squeezes numbers into a probability-like range between 0 and 1. Used in Binary Classification model …
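
A small sketch of the function, `1 / (1 + exp(-x))`, in plain Python. The name `sigmoid` and the two-branch form are choices made for this example; the branching only avoids overflow for large negative inputs and does not change the result.

```python
import math

def sigmoid(x):
    """Logistic function: maps any real number into the range 0 to 1."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    # For negative x, use the equivalent form exp(x) / (1 + exp(x)).
    z = math.exp(x)
    return z / (1.0 + z)

# Zero maps to the midpoint; large positive or negative inputs approach 1 or 0.
mid = sigmoid(0.0)
```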

Data that differs significantly from what a model was trained on is referred to as out-of-domain data.

Mean Absolute Error (MAE) is a metric for assessing regression predictions. Simply take the average of the absolute error between all labels and predictions in …
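
The averaging described above can be sketched as follows. The function name `mean_absolute_error` and the sample values are illustrative only.

```python
def mean_absolute_error(labels, predictions):
    """Average of the absolute differences between labels and predictions."""
    errors = [abs(y - p) for y, p in zip(labels, predictions)]
    return sum(errors) / len(errors)

# Absolute errors are 1, 0 and 2, so the mean is 1.0.
mae = mean_absolute_error([3, 5, 2], [2, 5, 4])
```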